Best self-hosted AI models for regulated industries: Financial services and government

Daniel Whitenack · 13 minute read

Updated May 11, 2026

TL;DR: Selecting a high-performing open-source AI model is only the first step for regulated enterprises. Llama 3.x, Mistral, Qwen 2.5, and Gemma 2 each support enterprise self-hosted deployment. Financial services and government workloads require inference, governance logic, and audit logs to remain entirely inside your own infrastructure. Whether your AI survives its first compliance review depends on deploying these models behind a self-hosted sovereign AI control plane, with automated AIBOM generation and NIST AI RMF-aligned policy enforcement, not on which model scored highest on MMLU (Massive Multitask Language Understanding).

Most engineering leaders evaluating self-hosted AI models obsess over inference latency and VRAM budgets while ignoring the system-level governance that determines whether their models survive a security sign-off. An open-source AI model running on your own hardware is not inherently secure.

Without a control plane enforcing policies at the API level, you've simply moved your compliance gap inside your own network perimeter.

This guide evaluates the top self-hostable models for regulated use cases and details the system-level controls required to keep them auditable, so you can build a defensible case for your CISO and GRC team.

Gaining control: self-hosted AI models for regulated firms

Self-hosted AI models run entirely within your own infrastructure, whether on-premises, in a cloud VPC, or air-gapped. Every query, document, and model output remains inside your own environment. When you send regulated data, such as patient records or customer financial data, to an external AI API, you introduce a third party into the data-handling chain, and no business associate agreement or data processing agreement eliminates your residency and access control obligations.

Data sovereignty and compliance requirements

The regulatory case for self-hosting is direct. Under ITAR, controlled technical data may not be made available to foreign persons without DDTC authorization, and infrastructure accessible to foreign nationals or hosted by foreign services fails that test. The FTC Safeguards Rule (GLBA) explicitly requires financial institutions to oversee third-party service providers and ensure they maintain adequate data protection controls. Routing regulated data through an external LLM endpoint makes both obligations harder to meet and harder to evidence. Self-hosting is the architectural prerequisite.

Mitigating external AI data egress

When a developer sends a document to an external API, three things happen outside your control: the payload transits vendor infrastructure, processing occurs on vendor hardware, and the vendor's logging and retention policies govern what the system stores about that interaction.

For workloads involving ePHI, CUI, ITAR-controlled technical data, or financial PII, each of those events creates a potential regulatory exposure. Self-hosting eliminates all three by keeping the inference path entirely within your own network perimeter. The Prediction Guard episode "EP12: Self-Hosted Sovereignty: Own Your AI with Prediction Guard" details the architecture.

AI policy enforcement and audit

A written AI governance policy is not the same as system-level enforcement. When your developers must manually follow guidelines about which AI inputs are acceptable (including prompts, retrieved documents, database results, and tool calls) and which outputs require review, your compliance posture depends on human consistency under delivery pressure. That gap surfaces in audit cycles.

System-level enforcement means every model interaction is governed at the API level, before and after inference, regardless of which developer sent the request or which framework they used. Organizations address this enforcement gap through several approaches, including open-source policy controls such as Open Policy Agent, SIEM-native controls, or purpose-built AI control planes.
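To illustrate what "before and after inference, regardless of which developer sent the request" means in practice, here is a minimal sketch of a governed endpoint. It is not Prediction Guard's API or Open Policy Agent; `GovernedEndpoint`, `deny_terms`, and the stub model are hypothetical names chosen for illustration, and a real control plane would use far richer policies than term matching.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Decision:
    allowed: bool
    reason: str = ""


# A policy inspects text (a prompt or a model output) and returns a Decision.
Policy = Callable[[str], Decision]


def deny_terms(*terms: str) -> Policy:
    """Hypothetical example policy: block any text containing a listed term."""
    def check(text: str) -> Decision:
        lowered = text.lower()
        for term in terms:
            if term.lower() in lowered:
                return Decision(False, f"matched blocked term '{term}'")
        return Decision(True)
    return check


@dataclass
class GovernedEndpoint:
    """Applies the same input and output policies to every caller and records each outcome."""
    model: Callable[[str], str]
    input_policies: List[Policy]
    output_policies: List[Policy]
    audit_log: List[Dict[str, object]] = field(default_factory=list)

    def _enforce(self, stage: str, caller: str, text: str, policies: List[Policy]) -> None:
        for policy in policies:
            decision = policy(text)
            if not decision.allowed:
                self.audit_log.append(
                    {"caller": caller, "stage": stage, "allowed": False, "reason": decision.reason}
                )
                raise PermissionError(f"{stage} blocked: {decision.reason}")

    def invoke(self, caller: str, prompt: str) -> str:
        self._enforce("input", caller, prompt, self.input_policies)    # before inference
        output = self.model(prompt)
        self._enforce("output", caller, output, self.output_policies)  # after inference
        self.audit_log.append({"caller": caller, "stage": "complete", "allowed": True, "reason": ""})
        return output


# Usage with a stub model standing in for a self-hosted inference server
endpoint = GovernedEndpoint(
    model=lambda p: f"summary of: {p}",
    input_policies=[deny_terms("ignore previous instructions")],
    output_policies=[deny_terms("internal use only")],
)
print(endpoint.invoke("analyst-team", "Summarize clause 4."))
```

Because enforcement lives in the endpoint rather than in each application, a developer cannot skip a policy by calling the model from a different framework.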

Prediction Guard (the publisher of this guide) deploys this enforcement as a purpose-built sovereign control plane inside your own infrastructure for self-hosted deployments, generating structured audit logs stored within your own environment. The self-hosted deployment documentation details the architecture.

Evaluation criteria for self-hosted AI in regulated environments

Before comparing specific models, you need a consistent evaluation framework, because the criteria that matter for regulated deployment differ significantly from academic benchmarks.

Performance benchmarking and factual consistency

Benchmark scores like MMLU measure knowledge and reasoning across diverse domains, including STEM, humanities, and social sciences, but regulated use cases demand factual consistency checking on domain-specific outputs.

Factual consistency checking is probabilistic, not deterministic: the same input can produce different model outputs across runs, so a single passing check does not guarantee that later outputs will pass, and your QA process needs to account for that. Evaluate models against your actual task distribution, such as clinical note summarization, contract clause extraction, or compliance report generation, not leaderboard rankings.
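One way to account for run-to-run variation is to score each evaluation case several times and track its pass rate rather than a single pass/fail. The sketch below assumes you supply a `generate` function wrapping your self-hosted model and an `is_consistent` checker; the stub model, the substring checker, and the 0.95 threshold are hypothetical placeholders.

```python
from itertools import cycle
from typing import Callable, Dict, List, Tuple


def consistency_pass_rates(
    generate: Callable[[str], str],
    is_consistent: Callable[[str, str], bool],
    cases: Dict[str, Tuple[str, str]],
    runs_per_case: int = 5,
) -> Dict[str, float]:
    """Run every (prompt, reference) case several times and return its pass rate."""
    rates = {}
    for case_id, (prompt, reference) in cases.items():
        passes = sum(is_consistent(generate(prompt), reference) for _ in range(runs_per_case))
        rates[case_id] = passes / runs_per_case
    return rates


def failing_cases(rates: Dict[str, float], threshold: float = 0.95) -> List[str]:
    """Cases whose pass rate falls below the (assumed) acceptance threshold."""
    return sorted(case for case, rate in rates.items() if rate < threshold)


# Stub model that answers correctly four times out of five, standing in for
# a sampled LLM whose output varies between runs.
_answers = cycle(["Net 30 days", "Net 30 days", "Net 45 days", "Net 30 days", "Net 30 days"])


def stub_generate(prompt: str) -> str:
    return next(_answers)


def contains_reference(output: str, reference: str) -> bool:
    """Deliberately simple checker; real pipelines often use an NLI or LLM-based scorer."""
    return reference.lower() in output.lower()


cases = {"payment-terms-001": ("What are the payment terms?", "Net 30 days")}
rates = consistency_pass_rates(stub_generate, contains_reference, cases)
print(rates, failing_cases(rates))
```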

The Prediction Guard model performance and security episode covers this evaluation methodology in detail.

Inference latency and memory efficiency

Q4_K_M is a mixed-precision 4-bit "k-quant" format from the llama.cpp (GGUF) ecosystem. It reduces model weight precision to lower VRAM requirements while preserving more accuracy than simpler 4-bit schemes, and it is one of the most widely used quantization formats for self-hosted deployments. At Q4_K_M quantization, approximate VRAM requirements by model scale break down as follows:

| Model scale | VRAM required (Q4_K_M) |
|---|---|
| 8B models | ~6 GB |
| 14B models | ~10 GB |
| 32B models | ~20 to 22 GB |
| 70B models | ~43 GB |

Plan GPU allocation around peak concurrent load, not average load, and size your inference serving layer accordingly.
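The gap between average and peak load comes mostly from the KV cache, which grows with every concurrent sequence. A rough sizing sketch: total VRAM ≈ quantized weights + (KV cache per sequence × concurrent sequences). It assumes Llama 3.1 8B's shape (32 layers, 8 KV heads, head dimension 128), an FP16 KV cache, and roughly 4.85 bits per weight on average for Q4_K_M (an approximation); it ignores activation memory and serving-framework overhead, so treat the result as a lower bound.

```python
# Rough VRAM sizing: quantized weights plus KV cache for every concurrent sequence.
# All inputs are assumptions; activation memory and framework overhead are ignored.

BYTES_PER_GB = 1e9  # decimal gigabytes


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Memory for model weights at a given average bits-per-weight."""
    return num_params * bits_per_weight / 8 / BYTES_PER_GB


def kv_cache_gb_per_sequence(num_layers: int, num_kv_heads: int, head_dim: int,
                             context_tokens: int, bytes_per_value: int = 2) -> float:
    """KV cache for one sequence: keys and values (x2) for every layer and token."""
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return per_token * context_tokens / BYTES_PER_GB


def total_vram_gb(weights_gb: float, kv_per_seq_gb: float, concurrent_seqs: int) -> float:
    return weights_gb + kv_per_seq_gb * concurrent_seqs


# Assumed Llama 3.1 8B shape and an assumed ~4.85 bits/weight average for Q4_K_M
weights = weight_memory_gb(8e9, 4.85)
kv = kv_cache_gb_per_sequence(num_layers=32, num_kv_heads=8, head_dim=128, context_tokens=8192)

average_load = total_vram_gb(weights, kv, concurrent_seqs=2)
peak_load = total_vram_gb(weights, kv, concurrent_seqs=8)
print(f"weights {weights:.2f} GB, KV per 8K-token sequence {kv:.2f} GB")
print(f"average (2 seqs): {average_load:.1f} GB, peak (8 seqs): {peak_load:.1f} GB")
```

Under these assumptions, moving from two to eight concurrent 8K-token sequences roughly doubles the footprint, which is why sizing to average load leaves peak traffic without memory.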

Open source AI licensing for regulated use

Licensing is a legal review item, not an engineering checkbox. Apache 2.0 permits unrestricted commercial use, modification, and distribution without MAU thresholds or competitive restrictions. Llama 3's Meta Community License includes a 700M monthly active user (MAU) threshold, above which licensees must request a separate license from Meta, and a competitor restriction.

Meta's Llama Acceptable Use Policy prohibits use for materials or activities subject to ITAR outright: this is a ban, not a review obligation. For organizations deploying Llama 3 in commercial products, legal review is recommended to assess the 700M MAU threshold and the competitor restriction, which prohibits using Llama outputs to train or improve other LLMs.
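Teams often encode these licensing rules as a gate in their model registry so that an ITAR-tagged workload can never be routed to a prohibited model. The sketch below is a hypothetical encoding of the rules above (Apache 2.0 still needs a model card check; every other license goes to legal review; the Llama AUP bars ITAR-subject use). The license strings and outcome labels are illustrative, and the gate routes decisions rather than replacing legal review.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class ModelEntry:
    name: str
    license: str


APACHE = "Apache-2.0"
# Llama's Acceptable Use Policy bars ITAR-subject use outright.
ITAR_PROHIBITED_LICENSES = frozenset({"Meta Community License"})


def license_gate(model: ModelEntry, workload_tags: FrozenSet[str]) -> str:
    """Hypothetical registry check run before a model is approved for a workload."""
    if "itar" in workload_tags and model.license in ITAR_PROHIBITED_LICENSES:
        return "prohibited"
    if model.license == APACHE:
        # Terms can change across releases, so confirm against the current model card.
        return "approved-pending-model-card-check"
    # MAU thresholds, competitor clauses, or custom terms: route to counsel.
    return "legal-review"


itar_workload = frozenset({"itar", "government"})
for entry in [
    ModelEntry("Llama 3.1 70B", "Meta Community License"),
    ModelEntry("Mixtral 8x7B", "Apache-2.0"),
    ModelEntry("Qwen 2.5 72B", "Qwen License"),
]:
    print(entry.name, "->", license_gate(entry, itar_workload))
```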

AI vendor lifecycle and support

Governance tied to a single cloud provider's console cannot be migrated when you change vendors. Evaluate models and governance tooling together: can you replace one model with another without rebuilding your policy enforcement layer? Hardware and infrastructure agnosticism determines whether your governance configuration survives a model swap.

Best self-hosted AI models for regulated use cases

The following models represent the strongest options for enterprise self-hosted deployment in regulated industries as of 2026.

Table 1: Model specifications for top self-hosted AI models in regulated enterprises

| Model | Parameters | Context window | License |
|---|---|---|---|
| Llama 3.1 8B | 8B | 128K tokens | Meta Community License |
| Llama 3.1 70B | ~70B | 128K tokens | Meta Community License |
| Mistral 7B v0.2 | 7B | 32K tokens | Apache 2.0 |
| Mixtral 8x7B | ~46.7B (MoE) | 32K tokens | Apache 2.0 |
| Qwen 2.5 7B / 32B | 7B, 32B | 128K tokens | Apache 2.0 |
| Qwen 2.5 72B | 72B | 128K tokens | Qwen License |
| Gemma 2 2B / 9B / 27B | 2B, 9B, 27B | 8K tokens | Gemma Terms of Use |

Table 2: Hardware requirements at Q4_K_M quantization

| Model | Min VRAM (Q4_K_M) |
|---|---|
| Llama 3.1 8B | ~6 GB |
| Llama 3.1 70B | ~43 GB |
| Mistral 7B v0.2 | ~4 to 5 GB |
| Mixtral 8x7B | ~25 to 30 GB |
| Qwen 2.5 32B | ~20 to 22 GB |
| Gemma 2 9B | ~5.7 GB |

Llama 3.x: self-hosted architecture

Llama 3.1 at 70B delivers competitive reasoning and instruction-following for complex regulated tasks, including multi-step compliance review and policy document analysis. Its 128K context window accommodates long-form financial agreements and clinical records without chunking. Any organization deploying Llama in a commercial product should have counsel review the Meta Community License, particularly if that product serves a large external user base.

Flag the MAU clause and competitor restriction to your legal team before committing to production. For production inference at FP16 (unquantized weights, roughly 140 GB for 70B parameters at 2 bytes each), plan for four NVIDIA A100 80GB GPUs to support the full 128K context window. Two A100 80GB GPUs can serve FP16 inference with a reduced context window. Applying 4-bit AWQ or GPTQ quantization cuts weight memory to roughly a quarter of FP16, freeing enough VRAM for two A100 80GB GPUs to serve a wider context window. Both quantization methods typically come with a modest reduction in output accuracy compared to full-precision inference.

For regulated workloads where factual consistency is critical, validate quantized model outputs against your domain-specific task distribution before committing to a two-GPU configuration in production.
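The four-GPU versus two-GPU guidance follows from simple arithmetic on weights plus KV cache. The sketch below assumes Llama 3.1 70B's architecture (80 layers, 64 query heads, 8 KV heads, head dimension 128), a flat 70B parameter count, one sequence, an FP16 KV cache, and 90% of each GPU's memory being usable (similar in spirit to vLLM's `gpu_memory_utilization` default); it ignores activation memory, so real limits are lower.

```python
import math

# Assumed Llama 3.1 70B shape
NUM_PARAMS = 70e9
NUM_LAYERS, NUM_HEADS, NUM_KV_HEADS, HEAD_DIM = 80, 64, 8, 128
FP16_BYTES = 2

GPU_BYTES = 80e9        # one A100 80GB
USABLE_FRACTION = 0.9   # assumed headroom per GPU

weights_bytes = NUM_PARAMS * FP16_BYTES                               # ~140 GB
kv_per_token = 2 * NUM_LAYERS * NUM_KV_HEADS * HEAD_DIM * FP16_BYTES  # keys + values, all layers


def min_gpus(context_tokens: int) -> int:
    """Smallest tensor-parallel size whose pooled memory fits weights plus one full-context sequence."""
    needed = weights_bytes + kv_per_token * context_tokens
    tp = math.ceil(needed / (GPU_BYTES * USABLE_FRACTION))
    # Tensor parallelism splits attention heads evenly, so the GPU count must divide NUM_HEADS.
    while NUM_HEADS % tp != 0:
        tp += 1
    return tp


def max_context_tokens(num_gpus: int) -> int:
    """Tokens of KV cache that fit after loading FP16 weights (one sequence, no activations)."""
    free = num_gpus * GPU_BYTES * USABLE_FRACTION - weights_bytes
    return max(0, int(free // kv_per_token))


print("GPUs for full 128K context:", min_gpus(131_072))
print("Context on two A100 80GB GPUs:", max_context_tokens(2), "tokens")
```

Under these assumptions a full 128K-token sequence adds about 43 GB of KV cache, which pushes the requirement past two GPUs; because three does not divide 64 attention heads, the practical tensor-parallel size becomes four. Two GPUs leave room for only about 12K tokens of context before activation overhead.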

Self-hosting Mistral and Mixtral AI models

Mistral 7B v0.2 and Mixtral 8x7B both ship under Apache 2.0, removing the licensing complexity present in Llama 3. Mistral 7B v0.2's 4 to 5 GB minimum VRAM requirement at Q4 quantization makes it a hardware-efficient option for edge or distributed deployment scenarios, such as field operations in manufacturing or logistics.

Mixtral 8x7B's mixture-of-experts architecture delivers high throughput on batch workloads by routing each token to only two of its eight experts per layer, so roughly 12.9B of its ~46.7B parameters are active per token, which is relevant for financial services teams processing large document volumes. Because every expert must still be resident in memory, VRAM tracks the full parameter count: at Q4 quantization, Mixtral 8x7B requires approximately 25 GB VRAM, and some configurations may need 28 to 30 GB depending on context length and batch size. The air-gapped deployment episode covers Kubernetes-native deployment for these models.
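The toy layer below shows why mixture-of-experts models save compute but not memory: a router scores all eight experts, only the top two run, and their outputs are mixed with softmax weights computed over the selected experts. This is a simplified, pure-Python illustration with random weights and tiny dimensions, not Mixtral's actual implementation.

```python
import math
import random

NUM_EXPERTS, TOP_K, DIM = 8, 2, 4  # Mixtral-like routing: 8 experts, 2 active per token
rng = random.Random(0)


def rand_matrix(rows, cols):
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


# Toy weights. Every expert must stay loaded even though only TOP_K run per token.
experts = [rand_matrix(DIM, DIM) for _ in range(NUM_EXPERTS)]
router = rand_matrix(NUM_EXPERTS, DIM)  # one scoring row per expert


def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def moe_layer(token):
    logits = matvec(router, token)  # one routing score per expert
    top = sorted(range(NUM_EXPERTS), key=lambda i: logits[i], reverse=True)[:TOP_K]
    # Softmax over the selected experts' logits only
    peak = max(logits[i] for i in top)
    weights = {i: math.exp(logits[i] - peak) for i in top}
    norm = sum(weights.values())
    gates = {i: w / norm for i, w in weights.items()}
    out = [0.0] * DIM
    for i, gate in gates.items():  # compute cost scales with TOP_K, not NUM_EXPERTS
        expert_out = matvec(experts[i], token)
        out = [o + gate * y for o, y in zip(out, expert_out)]
    return out, gates


output, gates = moe_layer([1.0, 0.5, -0.5, 2.0])
print(f"experts used: {sorted(gates)} of {NUM_EXPERTS}")
```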

Evaluating Qwen 2.5 for secure AI

Qwen 2.5 was pretrained on Alibaba's large-scale dataset of up to 18 trillion tokens and supports up to 128K tokens of context, with multilingual capability across 29 or more languages. For multinational financial services firms or global organizations handling compliance documentation in multiple languages, Qwen 2.5's multilingual performance makes it a strong candidate.

The 0.5B, 1.5B, 7B, 14B, and 32B variants are generally available under Apache 2.0. Confirm current licensing terms against the Qwen model cards before committing to production, as terms may change across releases. The 72B variant operates under the Qwen License, which imposes a 100M MAU threshold and requires the same legal review as Llama 3 before production deployment.

Self-hosting Gemma 2 for compliance

Gemma 2 is available in 2B, 9B, and 27B parameter sizes, each operating under Google's Gemma Terms of Use. The 9B variant's sub-6 GB VRAM footprint at Q4 quantization makes it accessible for lightweight classification tasks, including PII entity tagging, document routing, and initial compliance flag triage before escalation to a larger model; its 8K-token context window also suits short inputs better than long-form documents. Gemma 2 also performs well on mathematical reasoning and coding tasks, so teams considering it only for classification should also benchmark it against their domain-specific reasoning requirements.
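A triage pattern like the one described above can be sketched as a small router: a lightweight classifier handles routine, high-confidence documents, and anything flagged or uncertain escalates to a larger model. The function signatures, label names, stub models, and the 0.85 confidence threshold are all hypothetical.

```python
from typing import Callable, Tuple

# Hypothetical signatures: the small model returns (label, confidence); the large model returns text.
SmallClassifier = Callable[[str], Tuple[str, float]]
LargeModel = Callable[[str], str]

ESCALATE_LABELS = frozenset({"compliance-flag"})


def triage(document: str, classify: SmallClassifier, large_model: LargeModel,
           min_confidence: float = 0.85) -> Tuple[str, str]:
    """Keep confident, routine labels on the small model; escalate flagged or uncertain documents."""
    label, confidence = classify(document)
    if label in ESCALATE_LABELS or confidence < min_confidence:
        return "escalated", large_model(document)
    return "routed", label


# Stubs standing in for, e.g., a small classifier and a 70B-class reviewer
def stub_classify(doc: str) -> Tuple[str, float]:
    if "sanction" in doc.lower():
        return "compliance-flag", 0.97
    return "routine-correspondence", 0.91


def stub_large(doc: str) -> str:
    return "detailed compliance review"


print(triage("Quarterly newsletter draft", stub_classify, stub_large))
print(triage("Possible sanctions exposure in wire", stub_classify, stub_large))
```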

Enterprise self-hosted AI for regulated use

The most defensible architecture for regulated enterprises doesn't pick one model. It composes multiple models into a governed system under a single OpenAI-compatible API. Prediction Guard's LangChain integration allows existing codebases to route to self-hosted models without rebuilding the toolchain:

```python
from langchain_predictionguard import ChatPredictionGuard

# Routes to your self-hosted model; control plane and inference stay inside your infrastructure
llm = ChatPredictionGuard(
    model="Hermes-3-Llama-3.1-70B",  # fine-tuned variant of Llama 3.1 70B, available via Prediction Guard control plane
    predictionguard_api_key="YOUR_SELF_HOSTED_ENDPOINT_KEY",
)

response = llm.invoke("Summarize the compliance obligations in this contract.")
print(response.content)
```

The AI governance policies you define in the Prediction Guard Admin console govern every call through this endpoint, and the control plane enforces them regardless of which LangChain chain or agent triggered the request. The accessing LLMs documentation covers the full endpoint configuration.

Best self-hosted AI for financial services

Financial services workloads combine high data sensitivity with strict audit requirements, making the choice of model and governance architecture a compliance decision as much as a technical one.

AI-powered financial data extraction

Contract analysis and earnings report parsing are high-value AI use cases in financial services because they convert unstructured legal and financial text into structured data for downstream systems. Schema-constrained output enforcement, which constrains model outputs to a predefined JSON schema, is what makes these use cases production-viable for downstream financial pipelines. Generation remains probabilistic, but schema enforcement guarantees structural correctness: constrained decoding prevents syntax errors by construction, and validation rejects any response that does not match the schema.

Semantic validation is still required to verify the accuracy of the content: a structurally valid JSON response can still contain a hallucinated value or incorrect field. Mistral 7B v0.2 and Mixtral 8x7B both perform well on structured extraction tasks at VRAM budgets typical for mid-market financial services infrastructure. The Prediction Guard document processing episode covers JSON schema enforcement for financial extraction pipelines.
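The distinction between structural and semantic validation is easiest to see side by side. In this sketch, a pure-Python type check stands in for a JSON Schema validator (such as the `jsonschema` package) to keep the example self-contained, and a deliberately simple substring check stands in for semantic validation. The schema fields, source text, and model output are hypothetical.

```python
import json
from typing import Any, Dict, List

# Hypothetical schema for a contract-extraction pipeline: field -> accepted Python type(s)
CONTRACT_SCHEMA = {"counterparty": str, "notional_usd": (int, float), "maturity_date": str}


def validate_structure(raw: str) -> Dict[str, Any]:
    """Structural check: valid JSON object with exactly the schema's fields and types."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on malformed output
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if set(data) != set(CONTRACT_SCHEMA):
        raise ValueError(f"field mismatch: {sorted(data)}")
    for name, expected in CONTRACT_SCHEMA.items():
        value = data[name]
        if isinstance(value, bool) or not isinstance(value, expected):  # bool is a subclass of int
            raise ValueError(f"{name} has type {type(value).__name__}")
    return data


def validate_semantics(data: Dict[str, Any], source_text: str) -> List[str]:
    """Semantic check: every extracted value must be traceable to the source document."""
    problems = []
    if data["counterparty"] not in source_text:
        problems.append("counterparty not found in source")
    if f"{data['notional_usd']:,.0f}" not in source_text:
        problems.append("notional_usd not found in source")
    if data["maturity_date"] not in source_text:
        problems.append("maturity_date not found in source")
    return problems


source = "Agreement with Acme Bank. Notional: USD 5,000,000. Matures 2027-06-30."
model_output = '{"counterparty": "Acme Bank", "notional_usd": 5000000, "maturity_date": "2027-03-31"}'

record = validate_structure(model_output)   # passes: structurally valid JSON
print(validate_semantics(record, source))   # flags the hallucinated maturity date
```

The model output passes every structural check yet still carries a maturity date that appears nowhere in the contract, which is exactly the failure mode semantic validation exists to catch.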

Implementing AI risk controls

OWASP LLM10:2025 (Unbounded Consumption) identifies resource exhaustion as a direct AI risk, and usage-based rate limiting and budget controls implemented at the control plane level prevent unbounded consumption conditions on shared inference infrastructure. For financial services teams, this also means enforcing which models each internal team can query, at what volume, and with what output constraints, without requiring developer-side implementation of each policy.
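Per-team limits of this kind are commonly built from a token bucket (for request rate) plus a running budget (for total tokens) and a model allowlist. The sketch below is a hypothetical, in-memory version; team names, model names, and limits are illustrative, and a real control plane would persist this state and share it across replicas. The injectable `clock` makes the behavior testable without sleeping.

```python
import time
from typing import Callable, Set


class TeamQuota:
    """Token-bucket request limiter plus a token budget and model allowlist for one team."""

    def __init__(self, requests_per_minute: int, monthly_token_budget: int,
                 allowed_models: Set[str], clock: Callable[[], float] = time.monotonic):
        self.capacity = float(requests_per_minute)
        self.refill_per_sec = requests_per_minute / 60.0
        self.tokens = self.capacity
        self.budget_remaining = monthly_token_budget
        self.allowed_models = allowed_models
        self.clock = clock
        self.last = clock()

    def check(self, model: str, estimated_tokens: int) -> str:
        if model not in self.allowed_models:
            return "denied: model not allowed for team"
        now = self.clock()
        # Refill the bucket in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.refill_per_sec)
        self.last = now
        if self.tokens < 1:
            return "denied: rate limit"
        if estimated_tokens > self.budget_remaining:
            return "denied: budget exhausted"
        self.tokens -= 1
        self.budget_remaining -= estimated_tokens
        return "allowed"


fake_now = [0.0]
quota = TeamQuota(requests_per_minute=2, monthly_token_budget=1000,
                  allowed_models={"mixtral-8x7b"}, clock=lambda: fake_now[0])
print([quota.check("mixtral-8x7b", 100) for _ in range(3)])
```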

The harmonizing AI tools blog addresses unified access management across fragmented AI tool ecosystems. The financial services workflows in this section process nonpublic financial information covered by GLBA and the FTC Safeguards Rule, which makes routing that data through external APIs difficult to defend; self-hosted deployment keeps it within the organization's defined perimeter.

Auditable AI for government use cases

Government and defense-adjacent workloads impose the strictest data residency and access control requirements of any regulated sector, making infrastructure architecture and compliance framework alignment non-negotiable before any model goes to production.

Government air-gapped AI hosting

Air-gapped deployment means running AI on infrastructure with no physical or logical connection to any external network. Data enters and exits only through controlled physical media transfer. For defense contractors and federal agencies, this is often a hard architectural requirement for workloads involving CUI, UCNI, or ITAR-controlled technical data.

Organizations with stringent security and compliance requirements often find that hosting their own services provides the control necessary to meet their operational needs. The air-gapped deployment episode covers the Kubernetes-native architecture for these environments.
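Because model weights and container images cross the air gap on physical media, a common practice is to build a SHA-256 manifest before export and verify it on import. The sketch below assumes such a manifest (relative path to hex digest) travels with the media; the manifest format and file names are hypothetical, and it uses only the Python standard library.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so multi-gigabyte weight files never load fully into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_transfer(directory: str, manifest: Dict[str, str]) -> List[str]:
    """Compare files imported from physical media against a manifest built before export."""
    root = Path(directory)
    problems = []
    for rel_path, expected in sorted(manifest.items()):
        path = root / rel_path
        if not path.is_file():
            problems.append(f"missing: {rel_path}")
        elif sha256_file(path) != expected:
            problems.append(f"hash mismatch: {rel_path}")
    present = {p.relative_to(root).as_posix() for p in root.rglob("*") if p.is_file()}
    problems.extend(f"unexpected: {extra}" for extra in sorted(present - set(manifest)))
    return problems
```

An empty result means every expected file arrived intact and nothing extra came along; any entry should block the import until it is investigated.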

CMMC and ITAR for self-hosted AI

ITAR controls the export of defense-related articles and services, and technical data subject to ITAR cannot be stored on or transmitted through systems accessible to foreign nationals or foreign-hosted infrastructure. CMMC Level 2 aligns with NIST SP 800-171 controls for CUI protection, while Level 3 adds enhanced protections drawn from NIST SP 800-172 for high-risk environments.

Self-hosted deployment in an air-gapped or VPC environment supports meeting these access control and audit requirements, whereas an external API endpoint introduces third-party access that conflicts with CUI handling obligations.

NIST AI RMF for secure government AI

The NIST AI Risk Management Framework organizes AI governance across four functions, and each maps directly to self-hosted control plane capabilities:

Govern: Policy enforcement and access controls, configured once in the Admin console and enforced across every model interaction.

Map: AIBOM generation, producing a structured inventory of every model, tool, dataset, and dependency for supply chain transparency.

Measure: Structured audit logging and usage monitoring within the customer's own infrastructure, generating the evidence base for regulatory review.

Manage: Centralized rate limiting, incident response integration via SIEM/SOAR forwarding, and runtime integrity monitoring to address identified risks.

The Prediction Guard OWASP guidance implementation episode maps these controls to specific OWASP LLM Top Ten items.

How federal agencies deploy self-hosted AI

Noblis, a Prediction Guard investor and customer, illustrates the behind-the-firewall deployment pattern for mission-critical environments. Bill Streilein, CTO of Noblis, describes the requirement directly:

"Prediction Guard provides a solution that enables them to host LLMs and generative AI behind the firewall, on their own premises."

Bill Streilein, CTO, Noblis

Implementing governance controls for self-hosted AI

Self-hosting a model inside your perimeter is necessary but not sufficient: the governance controls that sit above the model determine whether your deployment can withstand a compliance review.

Architecture comparison: External AI gateway vs. sovereign control plane

| Component | External AI gateway | Self-hosted sovereign control plane |
|---|---|---|
| Query routing | Transits vendor cloud infrastructure | Stays inside customer network perimeter |
| Inference processing | Occurs on vendor hardware | Occurs on customer-owned or customer-controlled hardware |
| Governance logic | Enforced on vendor systems | Enforced inside customer infrastructure |
| Audit logs | Stored under vendor retention policies | Stored within customer environment |
| Data sovereignty | Dependent on vendor BAA/DPA | Structural: no third-party data path exists |

Sovereign AI control plane policy enforcement

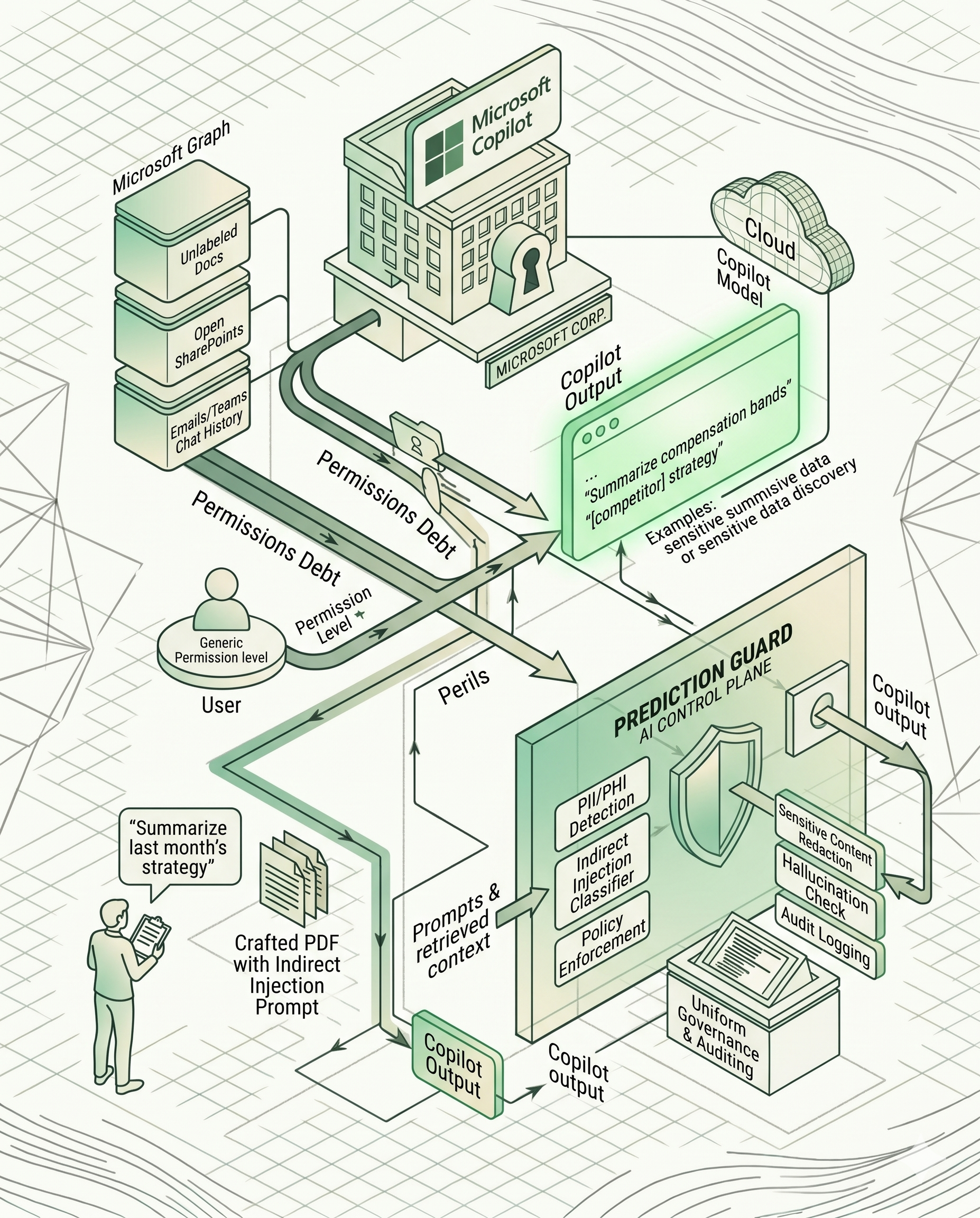

A control plane deployed inside your infrastructure generates and retains every governance record within your own environment, making your audit posture independent of any vendor's access or availability. External gateways process governance logic and store audit logs on vendor infrastructure outside your control, which means the evidence you'd present to an auditor depends on a third party's data retention policies. The Microsoft Copilot security risks blog explains this architecture gap in detail.

Auditable AI usage logs

Native SIEM/SOAR integration into Splunk, Datadog, and generic syslog forwarders means AI security events reach the systems your security team already uses. Audit log retention satisfies compliance requirements for evidence preservation. SIEM forwarding enables operational security response. You need both for a complete governance posture, and they are distinct capabilities, not interchangeable ones. The agentic AI automation episode covers threat detection and response in governed agentic workflows.
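The two capabilities can share one structured record: it is appended to a local retention log for evidence and formatted as an RFC 5424 syslog line for forwarding. In this sketch the field names, the choice to store a SHA-256 of the prompt instead of its text, and the `local0` facility are assumptions; in production, Python's `logging.handlers.SysLogHandler` or your SIEM's own collector would handle transport.

```python
import hashlib
import json
import socket
from datetime import datetime, timezone
from typing import Dict

FACILITY_LOCAL0 = 16
SEVERITY = {"warning": 4, "info": 6}


def audit_record(caller: str, model: str, prompt: str, decision: str, reason: str = "") -> Dict[str, str]:
    """Hypothetical audit schema; the prompt is hashed so raw text never lands in the log."""
    return {
        "ts": datetime.now(timezone.utc).isoformat(),
        "caller": caller,
        "model": model,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "decision": decision,
        "reason": reason,
    }


def append_to_retention_log(path: str, record: Dict[str, str]) -> None:
    """Evidence preservation: append-only JSON Lines file inside your own environment."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")


def to_syslog_line(record: Dict[str, str], severity: str = "info", app: str = "ai-control-plane") -> str:
    """Operational forwarding: RFC 5424 line (PRI VERSION TIMESTAMP HOST APP PROCID MSGID SD MSG)."""
    pri = FACILITY_LOCAL0 * 8 + SEVERITY[severity]
    host = socket.gethostname()
    return f"<{pri}>1 {record['ts']} {host} {app} - audit - {json.dumps(record, sort_keys=True)}"


rec = audit_record("risk-team", "Mixtral-8x7B", "Summarize account 123", "allowed")
print(to_syslog_line(rec))
```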

Governing your AI model inventory

Frameworks including the NIST AI RMF and the EU AI Act call on organizations to maintain detailed records of AI components, yet governance cataloguing frequently lags behind the pace of engineering deployment, leaving compliance teams without a complete AI asset inventory when they need one. An AIBOM documents models, datasets, code, hardware, data processing, and governance configuration in a machine-readable format.

CycloneDX, published as ECMA-424, is a standard format for this export. It lets organizations assess risks related to bias, data integrity, and model security while keeping AI systems aligned with regulatory standards. Prediction Guard generates AIBOMs in CycloneDX format covering every model, tool, dataset, and dependency in each AI system.
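For a sense of what such an export contains, here is a minimal sketch that assembles a CycloneDX-style AIBOM as JSON. It uses fields from the CycloneDX JSON format (`bomFormat`, `specVersion`, component types such as `machine-learning-model` and `data`, and a `dependencies` graph); the system name, components, and dataset are hypothetical, and any real output should be validated against the official CycloneDX schema. This is not Prediction Guard's generator.

```python
import json
import uuid
from typing import Dict, List


def make_aibom(system_name: str, components: List[dict], dependencies: Dict[str, List[str]]) -> dict:
    """Assemble a minimal CycloneDX-style AIBOM as a Python dict."""
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.6",
        "serialNumber": f"urn:uuid:{uuid.uuid4()}",
        "version": 1,
        "metadata": {"component": {"type": "application", "name": system_name, "bom-ref": system_name}},
        "components": components,
        "dependencies": [{"ref": ref, "dependsOn": deps} for ref, deps in dependencies.items()],
    }


# Hypothetical AI system: a contract-review assistant served by vLLM
components = [
    {"type": "machine-learning-model", "bom-ref": "mistral-7b-instruct-v0.2",
     "name": "Mistral-7B-Instruct-v0.2", "licenses": [{"license": {"id": "Apache-2.0"}}]},
    {"type": "data", "bom-ref": "contract-eval-set", "name": "contract-eval-set"},
    {"type": "library", "bom-ref": "vllm", "name": "vllm"},
]
dependencies = {
    "contract-review-assistant": ["mistral-7b-instruct-v0.2", "vllm"],
    "mistral-7b-instruct-v0.2": ["contract-eval-set"],
}

bom = make_aibom("contract-review-assistant", components, dependencies)
print(json.dumps(bom, indent=2))
```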

NIST and OWASP-aligned AI control

Explicit OWASP AI Top Ten coverage at the control plane level addresses the vulnerabilities most likely to surface in regulated deployments:

LLM01:2025 (Prompt Injection): Input validation and prompt injection detection at the API level, documented in the injection prevention documentation.

LLM02:2025 (Sensitive Information Disclosure): PII detection with configurable redact, mask, or replace actions before data reaches the model or audit logs.

LLM10:2025 (Unbounded Consumption): Usage-based rate limiting and budget controls enforced at the control plane, not reliant on developer-side implementation.
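The redact, mask, and replace actions listed for LLM02:2025 differ only in what substitutes for a detected entity. The sketch below shows the three actions with two regex detectors; the patterns and placeholder labels are hypothetical, and production PII detection typically relies on trained entity-recognition models rather than regexes alone.

```python
import re
from typing import Callable, Dict, Pattern

# Hypothetical detectors for illustration only
PATTERNS: Dict[str, Pattern[str]] = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def apply_pii_action(text: str, action: str = "replace") -> str:
    """Apply one configured action to every detected entity before text reaches a model or log."""
    def substitute(label: str) -> Callable[[re.Match], str]:
        def _sub(match: re.Match) -> str:
            if action == "redact":
                return ""                        # remove the value entirely
            if action == "mask":
                return "*" * len(match.group())  # keep length, hide content
            return f"<{label}>"                  # replace with an entity-type placeholder
        return _sub

    for label, pattern in PATTERNS.items():
        text = pattern.sub(substitute(label), text)
    return text


print(apply_pii_action("SSN 123-45-6789, email a.b@example.com", "replace"))
```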

The OWASP AI Top Ten provides the complete item-by-item framework reference for compliance documentation.

Addressing key self-hosted AI implementation challenges

Selecting the right model is only part of the work. The sections below address the infrastructure, performance monitoring, and compliance framework alignment decisions that determine whether a self-hosted deployment holds up under operational and regulatory scrutiny.

Architecting self-hosted AI deployments

A production-grade self-hosted deployment typically includes the following components:

Serving infrastructure for model inference: a Kubernetes cluster with GPU device plugins, Docker containers on bare metal, a VPC-based setup using first-party cloud services, or a DIY on-premises configuration.

Inference serving layer: a model server such as vLLM or Hugging Face Text Generation Inference.

Persistent storage: a storage layer for model weights and audit logs.

Network isolation: a VPC or air-gapped configuration.

The control plane sits between your application layer and inference servers, enforcing AI governance policies on every request. Prediction Guard is hardware and infrastructure-agnostic across NVIDIA GPU hardware, which means your governance configuration doesn't need rebuilding when you change hardware generations or cloud providers. The golden path for AI blog covers production deployment architecture patterns.

Benchmarking self-hosted AI performance

Model drift and performance degradation occur when the distribution of real-world inputs diverges from the model's training data. Track inference latency, output factual consistency scores, and task-specific accuracy metrics against a baseline you establish at deployment. Structured audit logs from the control plane provide the raw data for this monitoring, and the LLM options documentation covers the model options available through the Prediction Guard control plane.
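A simple way to compare a monitoring window against the deployment-time baseline is to pair an input-distribution metric with a task metric. This sketch uses the Population Stability Index (PSI) over binned input lengths plus an accuracy-drop check; the bins, the 0.2 PSI threshold, and the five-point accuracy tolerance are assumptions to tune for your own workloads, and the data would come from your control plane's audit logs.

```python
import math
from typing import Dict, List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions (each a list of fractions)."""
    score = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        score += (a - e) * math.log(a / e)
    return score


def drift_alerts(baseline: Dict, current: Dict,
                 psi_threshold: float = 0.2, max_accuracy_drop: float = 0.05) -> List[str]:
    """Compare a monitoring window against the baseline captured at deployment."""
    alerts = []
    shift = psi(baseline["input_length_bins"], current["input_length_bins"])
    if shift > psi_threshold:
        alerts.append(f"input distribution shift (PSI={shift:.3f})")
    drop = baseline["task_accuracy"] - current["task_accuracy"]
    if drop > max_accuracy_drop:
        alerts.append(f"task accuracy down {drop:.1%}")
    return alerts


# Hypothetical baseline and weekly window: fraction of prompts in short/medium/long length bins
baseline = {"input_length_bins": [0.25, 0.50, 0.25], "task_accuracy": 0.92}
this_week = {"input_length_bins": [0.10, 0.30, 0.60], "task_accuracy": 0.84}
print(drift_alerts(baseline, this_week))
```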

Essential AI compliance frameworks

Regulated enterprises deploying self-hosted AI need alignment across multiple frameworks: NIST AI RMF, OWASP LLM Top Ten, OWASP Agentic AI Top Ten, and sector-specific regulations like HIPAA, ITAR, GLBA, or CMMC. Building this alignment manually from spreadsheets doesn't scale as your AI asset inventory grows. Codifying it into the control plane means enforcement happens automatically, audit logs are generated without human action, and the AIBOM provides the inventory compliance teams need for a regulator's asset inquiry.

Book a deployment scoping call to assess whether self-hosted deployment fits your infrastructure and compliance requirements.

FAQs

What is the minimum VRAM required to self-host Llama 3.1 70B?

At Q4_K_M quantization, Llama 3.1 70B requires approximately 43 GB of VRAM. Full-precision, unquantized FP16 inference requires four NVIDIA A100 80GB GPUs for full context window headroom, while two A100 80GB GPUs can serve FP16 inference with a reduced context window. Applying AWQ or GPTQ quantization compresses the weights further, so two A100 80GB GPUs can serve a wider context window, with a modest accuracy tradeoff compared to full-precision inference. Validate output quality against your specific regulated task distribution before deploying a two-GPU configuration in production.

Does Llama 3's license allow commercial use in regulated industries?

Yes, but with restrictions. The Meta Community License includes a 700M MAU threshold, above which you must request a separate license from Meta, and a competitor restriction, both of which require legal review before production deployment.

What is an AIBOM and why does it matter for regulated AI?

An AIBOM is a machine-readable inventory of every model, dataset, tool, and dependency in an AI system, typically exported in CycloneDX format. It supports the NIST AI RMF Map function's emphasis on supply chain transparency and gives compliance teams a structured asset register for regulatory inquiries.

Which Qwen 2.5 model sizes use Apache 2.0 versus the Qwen License?

The 0.5B, 1.5B, 7B, 14B, and 32B variants are generally available under Apache 2.0, though licensing terms should be confirmed against the current Qwen model cards before production deployment. The 72B variant uses the Qwen License, which imposes a 100M MAU threshold and requires legal review before commercial deployment.

Which open-source AI license is most straightforward for commercial enterprise deployment?

Apache 2.0 covers Mistral 7B v0.2, Mixtral 8x7B, and the majority of Qwen 2.5 variants. It permits unrestricted commercial use, modification, and distribution without MAU thresholds or competitor restrictions, making it the clearest licensing path for regulated enterprise deployment.

Key terms glossary

AIBOM (AI Bill of Materials): A machine-readable inventory of every model, dataset, tool, and dependency in an AI system, typically exported in CycloneDX format for supply chain transparency and regulatory audit purposes.

Air-gapped deployment: An infrastructure configuration with no physical or logical connection to any external network, where data transfer occurs only through controlled physical media. Commonly required for workloads involving ITAR-controlled data or classified government information.

CycloneDX: An Ecma International standard (ECMA-424) for software and AI bill of materials, used to document model provenance, dataset lineage, and dependency metadata in a machine-readable format.

NIST AI RMF: The NIST AI Risk Management Framework, organizing AI governance across four functions (Govern, Map, Measure, Manage) to help organizations identify, assess, and respond to AI-related risks.

OWASP AI Top Ten: A ranked list of the ten most critical security vulnerabilities in AI applications, including prompt injection (LLM01:2025), sensitive information disclosure (LLM02:2025), and unbounded consumption (LLM10:2025).

Sovereign AI control plane: A governance architecture deployed entirely within the customer's own infrastructure, where policy enforcement, audit logging, and governance logic remain inside the customer's perimeter for every model interaction.

Factual consistency checking: A probabilistic evaluation of LLM outputs against a reference document or ground truth source to detect hallucinations. Not deterministic: the same input can produce different outputs across runs.

Schema-constrained output enforcement: A control that constrains AI outputs to a predefined JSON schema, ensuring downstream financial or compliance pipelines receive machine-parseable data. The model's reasoning process remains probabilistic, and schema validation enforces structural correctness only: a syntactically valid output can still contain semantically inaccurate values, so semantic validation remains necessary.